“This case could completely wipe out the ATF’s ability to create law and subvert congress, which would be a massive win for the Second Amendment.”

The hidden organ that may control how fast you age

(NaturalNews) Two landmark Nature studies analyzed CT scans from over 25,000 adults using artificial intelligence to measure thymic health. Adults with the he…

U.S. Fertilizer Prices Return to Pre-Conflict Levels, But El Nino Threatens Food Inflation

(NaturalNews) Urea fertilizer prices in the United States have fallen 36% from their April peak and returned to levels seen before the U.S.-Iran conflict, according…

Study Links Pro-Inflammatory Diets to Higher Depression Risk, Especially in Women

(NaturalNews) A seven-year study of 3,740 older adults in Hong Kong found that those consuming pro-inflammatory diets had consistently higher levels of depressive s…

PepsiCo and Gatik Unleash Driverless Fleet: The Globalist Plan to Replace Truckers with AI-Powered Machines

(NaturalNews) PepsiCo and Gatik on Monday announced a multi-year strategic partnership that brings fully driverless heavy-duty trucks into commercial freight operat…

Pesticide Exposure Linked to Increased Risk of Childhood Leukemia and Brain Tumors, Review Finds

(NaturalNews) Overview of Findings: A comprehensive review of 88 studies spanning more than 40 years has linked pesticide exposure during pregnancy and early childh…

Court voids Trump’s $100,000 H-1B visa surcharge, calls it an illegal tax

(NaturalNews) A federal judge struck down Trump’s $100,000 H-1B visa fee, ruling it was an unlawful tax requiring congressional approval. The sudden fee cause…

Iran’s nuclear breakthrough: What it means for global power dynamics

(NaturalNews) President Obama’s administration deliberately released $100 billion in frozen Iranian funds and orchestrated a false-flag drone capture to hand ov…

Green New Deal scammers fake being MAGA to defeat Chip Roy

Rep. Chip Roy (R-Texas) ran to replace Ken Paxton as Texas attorney general but lost in the Republican primary runoff late last month to state Sen. Mayes Middleton. Roy’s defeat was apparently achieved with help from a coalition of green-energy elites desperate to protect the gravy train that he threatened to derail in Congress.

One of the most full-throated celebrations of Roy’s defeat came from the Invest in Tomorrow Coalition PAC — a California-based political outfit committed to punishing lawmakers like Roy who’ve sought to deny federal subsidies to renewable energy giants.

‘This is political warfare.’

“Good riddance, Chip Roy,” the PAC, which spent $1.7 million to tank the Republican’s campaign, said in a statement on May 26.

“As leaders within the clean energy industry, ITC PAC is proud of our role in ending Chip Roy’s political career, investing nearly $1.5 million to reach GOP voters where they are — including on conservative cable, streaming sites like Rumble, and on MAGA social media — to remind them that Chip Roy betrayed their leader,” the group added.

As part of its subversive campaign, the PAC insinuated in MAGA voter-targeted messaging that it was supportive of President Donald Trump and aligned with conservatives but Roy was not.

An ad shared by the PAC to Truth Social in February, for instance, claimed that Roy — whose voting record the Conservative Review gave a 100% Liberty Score and Heritage Action gave a 98% lifetime score — was “not MAGA enough for Texas.”

The PAC was launched by Peter Davidson, the CEO of Aligned Climate Capital, while Brendan Bell, the COO of Aligned Climate Capital, is listed as the PAC’s treasurer. Both men previously worked for the Obama Department of Energy.

Davidson made no secret of why Roy was targeted.

“Not only did he and the Freedom Caucus have the whole rewind and sunsetting of the [tax credits] for solar and wind … but the whole demonization of the industry, the whole language of the ‘Green New Scam’ — all that came from the Freedom Caucus, and that came from Chip Roy,” Davidson told Politico.

Last year, for instance, Roy ruffled feathers in the “Green New Scam” industry by championing legislation with Rep. Josh Brecheen (R-Okla.) that would repeal over 20 green energy tax subsidies created or expanded by the Biden administration’s so-called Inflation Reduction Act, thereby saving taxpayers hundreds of billions of dollars.

“The Inflation Reduction Act, better known as the Green New Scam, is providing massive unlimited subsidies to billion-dollar corporations and Chinese manufacturers to the detriment of American energy freedom and dominance,” Roy said at the time.

“It is responsible for building ineffective, unattractive, and unwanted energy projects enriching paper investors over the objections of the people living in Texas communities I represent,” Roy continued. “These subsidies need to go away immediately.”

‘Attack clean energy and we’ll end your career just like Chip Roy’s.’

When the PAC took to social media to gloat about his defeat, Roy proved unshaken in his resolve, stating in response, “I didn’t just declare war — I led the charge to successfully crush the crony ‘green new scam’ grift. Happy to do it. Will do it again. And again. I’m just getting started.”

The biggest donor to the subversive climate PAC that targeted Roy is Chris Larsen, a billionaire activist and cryptocurrency executive who partnered earlier this year with former head of the Sierra Club Michael Brune on a “climate change”-focused investment and philanthropy fund.

In a revelatory conversation that took place at the Prelude Climate Summit in May, Larsen and Brune discussed the devious plot to manipulate the right into getting onside with the climate agenda — partly by adjusting their rhetoric to conform with rightist talking points; by leveraging existing, ostensibly conservative-leaning organizations; and by attacking conservative opponents from the right.

When asked about Roy’s race, Larsen boasted that his fellow travelers torpedoed the congressman’s polling numbers with “aggressive ads,” adding, “This is political warfare.”

Although Larsen and Brune acknowledged that Middleton was not “good on climate,” Larsen said the point of this particular sabotage exercise was “to make an example of” Roy.

Rep. Roy could not immediately be reached for comment.

Given general elections in various red regions are no longer competitive for Democrats thanks to successful Republican redistricting initiatives, Larsen indicated that their next play is to back Trojan-horse candidates in GOP races.

“There’s going to be more and more districts as we all know that just aren’t competitive unless you just say, ‘Okay, well I’m just going to be playing in Republican primaries,’” Larsen said. “There’s gonna be, like, a Chip Roy, and there’s gonna maybe be a Romney-type person, right? Let’s get behind the person who’s all-in for low-cost energy of all kinds.”

Like Larsen and Brune, the Invest in Tomorrow Coalition PAC made abundantly clear that Roy wouldn’t be the last lawmaker targeted for daring to end the gravy train to climate elites.

“ITC PAC will spend millions going after enemies of American clean energy, and electing champions who know that American energy dominance means an all-of-the-above approach to energy that includes solar and other renewables,” the group said. “Every politician in America should be on notice: Attack clean energy, and we’ll end your career just like Chip Roy’s.”

Tom Matzzie — CEO of solar company CleanChoice Energy, executive chair of the PAC, and a donor to Democrat Mallory McMorrow’s U.S. Senate campaign — told Politico, “The goal here is, at the end of this election year, members of Congress, senators, governors, others, remember that if you act viciously against the industry, that there could be a couple million dollars dropped into your next race, and that could threaten your political future.”

The RAIR Foundation identified Republican Reps. Andy Biggs (Ariz.), Ralph Norman (S.C.), and Nancy Mace (S.C.) as some of the “Green New Scam” industry’s next targets.

While poised to hammer Republicans who are committed to derailing the renewable energy industry’s taxpayer-funded gravy train, the PAC is also willing to spend a fortune backing defenders of wind and solar credits like Iowa Rep. Mariannette Miller-Meeks (R).

Like Blaze News? Bypass the censors, sign up for our newsletters, and get stories like this direct to your inbox. Sign up here!

From sexting scandals to election fraud — if you’re a Democrat, ‘no one asks any questions’

Elections across the country this week have delivered no shortage of political drama, but two stories in particular are turning heads.

In Maine, several ex-girlfriends of Senate hopeful Graham Platner have hurled accusations of disturbing patterns of behavior at the Democrat — and his response hasn’t been promising.

Platner is also being accused of exchanging sexual text messages with women after he was married in 2023.

“So, Graham Platner, looking to move on from a week of controversy after telling supporters that his past had been weaponized,” BlazeTV host Stu Burguiere tells co-host Dave Landau. “That’s what happens, Dave. When you do something horrible and people catch you, that means they’re weaponizing what you’ve done.”

“Well, of course, it’s not being held accountable for the things you’ve done in your past. It’s just weaponizing the things you’ve done against you,” Dave jokes.

“When you’re a Democrat and you’re in one of these controversies, you’re able to live like this. No one asks any questions. You don’t address it, and no one follows up. What a wonderful way to be,” Stu says.

But it’s not just the Maine Senate election that is mired in controversy.

The Los Angeles mayoral race shifted significantly over the weekend, as candidate Nithya Raman passed Spencer Pratt for second place and will now go to the runoff against Mayor Karen Bass.

“So we will have Democrat versus Democrat at the end of all of this,” Stu says.

“Are you saying that a system designed to lock out Republicans is locking out a Republican?” Dave asks.

Stu points out that there’s clearly a “tiny bit of skepticism by most people on the right that this is actually real and not just out-and-out fraud.”

“Well, I think it’s also because the way that it seems that the voting system works is you have the maybe some older conservatives come in early, you see the numbers, and then at the last minute, like a big giant bag of letters to Santa in a courtroom, all of a sudden they all just appear for one person,” Dave jokes.

“And they’re not even for Karen Bass. They’re just for this other person to then beat Spencer Pratt to then push Karen Bass forward,” he adds.

Nancy Mace crashes and burns in South Carolina governor primary

Another congressional Republican who seems to have fallen out of favor with President Trump has suffered a humiliating defeat in a primary.

Rep. Nancy Mace (R-S.C.) now joins the likes of Sen. Bill Cassidy (R-La.) and Rep. Thomas Massie (R-Ky.) in losing a primary battle in resounding fashion after failing to earn an endorsement from Trump. On Tuesday night, Mace finished a distant fifth place in the South Carolina Republican gubernatorial primary.

‘This isn’t the end of the fight. It’s just the end of this chapter.’

Before 9 p.m. ET, she had conceded defeat, posting a lengthy concession message on X. “Serving South Carolina has been the greatest honor of my life. Every vote I cast, every hearing I called, every fight I picked — it was always for you,” she began.

“Apparently, I chose wrong if the goal was winning an election. I’m at peace with that. Because when a candidate is OK with corruption and cover-ups — something is broken. That’s not a political opinion. That’s a moral emergency,” she continued.

“This isn’t the end of the fight. It’s just the end of this chapter,” she assured her supporters.

Before 7:30 the next morning, Mace appeared to be in light spirits once again, joking on X: “Enjoying my first cup of coffee since getting my ass kicked last night.”

Always outspoken, Mace likely lost all hope of a Trump endorsement this year after she pushed for ever more Epstein disclosures, even as she thanked Trump for supporting the “survivors.”

Mace’s alliance with Trump has been precarious for years. In the 2022 Republican primary for Mace’s congressional seat, Trump endorsed a challenger and shifted his support to Mace only after she prevailed. Trump then endorsed Mace’s re-election bid in 2024.

Now that she is leaving Congress, Mace says she plans to return “to the private sector … as the Founders intended,” signaling that she may have closed the door on her political aspirations for good.

Trump, meanwhile, can claim victory in her disastrous gubernatorial bid. The candidate he endorsed, Lieutenant Governor Pamela Evette, led the pack, collecting 28.9% of the vote.

Second-place finisher Attorney General Alan Wilson received 26.2%. Evette and Wilson now head for a runoff election scheduled for June 23.

Teacher accused of sexually assaulting student; court docs say she texted boy: ‘I won’t do well in jail … I’m too pretty’

A former teacher from Georgia is accused of sexually assaulting a student and attempting to convince the teen to run away to Mexico with her after investigators uncovered nearly 20,000 damning text messages between the pair, according to multiple reports.

The Roswell Police Department said in a statement that 55-year-old Amanda Katz was arrested on June 2. Bond was set at $25,000, according to jail records.

‘I can’t stop looking at you and certainly keep my hands to myself.’

WAGA-TV reported that Katz was charged with improper sexual contact by an employee or agent.

The charge carries a maximum sentence of 20 years in prison, according to the New York Post.

Katz had been a teacher and administrative assistant at Roswell High School.

WXIA-TV obtained the arrest warrant, which said Katz had taught the alleged victim before she moved into an administrative role at Roswell High School.

Police said Katz sexually assaulted a 16-year-old student during multiple off-campus encounters between December 2025 and February 2026.

Katz resigned in the middle of the police investigation, police said.

Fulton County Schools confirmed the district no longer employs her.

Citing the affidavit, the Post reported that the alleged victim told police he had unprotected sex several times with Katz in her home and the backseat of her Jaguar car.

“During a forensic interview, the teen told investigators Katz encouraged him to transfer from Roswell High School to another high school that would allow him to graduate sooner,” WXIA reported.

The student said Katz told him she was “literally scared s**tless” about the prospect of getting caught with the boy and going to prison, the affidavit said.

WXIA reported that Katz was “considered a trusted family friend” of the alleged victim, and she even tutored the boy’s younger sibling. The affidavit said Katz bought gifts for the alleged victim’s siblings, including jewelry and a Nintendo Switch.

The teen’s mother grew suspicious of the alleged illicit relationship when Katz invited the boy and his family to a cabin in the north Georgia mountains during Valentine’s Day weekend, the arrest warrant stated.

WSB-TV reported that the teen’s mother said she noticed how “comfortable” Katz and her son were.

The Atlanta Journal-Constitution obtained the arrest warrant, which said the teen’s mother discovered text messages on her son’s cell phone and “knew there was something wrong.”

The warrant said when Katz returned to work after the trip, she told her co-workers that she was “in a manic state” and complained that her “boyfriend’s mother” said they couldn’t be in a relationship.

The warrant revealed that when a co-worker learned that Katz’s boyfriend was a student at the high school, the co-worker alerted authorities.

Detectives obtained the teen’s cell phone, which revealed Katz and the boy exchanged at least 19,585 text messages and made 591 calls, the warrant said.

“Throughout the lengthy text thread between [teen] and Amanda, they discussed their relationship and potential future lives together, how and why they need to keep their relationship secret, and multiple sexual interactions,” the affidavit said, according to People magazine.

The New York Post reported, “In other messages, she told the boy that sex was ‘fun,’ and that she would ‘walk away from everything’ to be with him.”

The warrant said Katz messaged the student, “I meant everything I said to you. I would give you everything I have. And I’m human. I know it sounds stupid, but you not being mine isn’t an option. I can’t do this.”

The alleged victim told investigators Katz wanted him to move into her home after he graduated from high school, police said.

“[He] said he had mixed feelings about leaving his family and that Amanda wanted to go further with their relationship, move in together, and to commit to each other. … [He] said Amanda offered to take care of him (financially and provide a place to stay, etc.),” the affidavit read.

Katz also wanted the student to move to Mexico with her, police said.

“[He] also said that Amanda had told him that she wanted to move to Mexico with him, but he did not want to do that, nor did he understand why she would want to go there,” the affidavit revealed.

According to the affidavit, “Amanda also admitted that her actions were inappropriate and that she could be arrested if detected.”

“I have done a lot of stupid things. A lot. But this is top level,” Katz wrote, according to the affidavit.

“I am crazy about you. I can’t be around you,” Katz said to the teen, the affidavit said.

The affidavit said Katz texted the teen, “I can’t stop looking at you and certainly keep my hands to myself. Just telling you this can get me fired. … I need to leave Roswell. … Please delete this entire thread.”

“I was truly happy for the first time in a long time. I want you to know that. But this is killing me. This conversation can’t happen. Everything about us has to be deleted,” read a text message Katz sent to the teen on Dec. 29, 2025, WSB reported.

“We are lying to everyone. For what? How long? I don’t want to go to jail … I won’t do well in jail … I’m too pretty,” Katz wrote to the teen, the warrant stated.

The arrest warrant said Katz told the teen, “I loved you. And this is the most f**ked up and scary thing that has ever happened to me. You f**king broke my heart. … This is probably the worst thing anyone has ever done to me. You abandoned me.”

The Roswell Police Department and Fulton County Schools did not immediately respond to Blaze News’ request for comment.

African suspected of trying to cut white Briton’s head off identified — while police fret about online critics

The Sudanese asylum-seeker arrested for the horrific attempted beheading that took place in Northern Ireland on Monday night appeared in court on Wednesday, where he declined to enter a plea.

In addition to being identified, the African has been slapped with additional criminal charges after the brutal attack he is accused of committing prompted a fiery night of rioting in Belfast as well as demands for transparency and a withdrawal from the EU Migration Pact from rightist lawmakers.

‘We will be going after them.’

Now that the liberal establishment has pivoted from feigning horror over the attempted beheading to expressing outrage over the backlash, police are threatening to arrest online influencers who raised the alarm about the incident.

Quick background

A black male was caught on camera sitting atop a bloodied white male in the middle of a north Belfast street, shouting something in a foreign tongue, then carving with a knife into the victim’s face and neck.

The attack was interrupted by a Good Samaritan armed with a wooden hurl stick who gave the attacker a good thwacking. Another two men rushed in to help — one attempting to pull the victim to safety and the other giving a few well-placed kicks to the aggressor’s head.

The attacker, who was initially identified by police as Somali but later confirmed to be a Sudanese national, was arrested on suspicion of attempted murder.

The victim, who has been identified as Stephen Ogilvie, a British citizen, was taken to the hospital in serious condition with grievous injuries to his face, neck, and back.

Suspect identified

Gavin Robinson, a member of the British Parliament for East Belfast, stated on Tuesday that the Sudanese suspect was living in the U.K. under a five-year visa.

Police subsequently confirmed that the suspect, 30-year-old Hadi Alodid, entered Northern Ireland from the Republic of Ireland in 2023, applied for asylum, and was granted leave to remain in the country until 2028.

The Telegraph reported that the suspect had “used a loophole” in the British asylum system — traveling from Sudan to Paris and then to Dublin, before taking a bus to Belfast and then claiming asylum.

In addition to the original charge of attempted murder, Alodid has been charged with possessing a knife in a public space and threatening to kill a woman who works as a radiographer for the National Health Service.

Alodid appeared at Laganside magistrate’s court on Wednesday, where he communicated via an Arabic interpreter. The stabbing suspect — who allegedly left Ogilvie with no left eye, a damaged right eye, and deep cuts on his face and back — refused legal representation and declined to respond to the charges.

Alodid was denied bail at the urging of the Police Service of Northern Ireland.

Police told the court that the African’s release posed a threat of further offenses and a flight risk and could lead to “significant public disorder,” reported the BBC.

The suspect’s next court date is July 8.

Failed containment

The PSNI implored the general public on Tuesday not to share footage of the horrific attack, but the British public evidently had other ideas.

To the great chagrin not only of police but of those leftist lawmakers who expressed concerns over the inevitable political fallout, the video — yet another damning reminder of the isles’ disastrous immigration policies and failed dogma of multiculturalism — went viral with the help of remigration activist Tommy Robinson and others.

Belfast was subsequently rocked by protests and, on Tuesday evening, riots in which homes, cars, and a bus were torched.

Some of the hundreds of black-clad young men who roamed the streets of the capital city on Tuesday reportedly shouted, “Foreigners out!” and pelted asylum-seeker housing with rocks.

‘F**k ’em.’

Assistant Chief Constable Ryan Henderson of the PSNI said in a statement, “Sporadic pockets of disorder have broken out in a number of locations across Northern Ireland this evening, including incidents in which a number of vehicles have been set on fire.”

Prime Minister Keir Starmer and various other lawmakers condemned the riots — in many cases more forcefully than they condemned the attempted beheading.

“The scenes in Belfast last night were shocking and completely unacceptable,” Starmer stated on Wednesday morning. “There is no justification for the violence and disorder that we saw threatening our communities, nor for those who encouraged it, online or elsewhere. It is clear that people were targeted last night because of their background and I will not tolerate it.”

Police have arrested several alleged rioters and are threatening to arrest online influencers over their provocative commentary regarding the attempted beheading.

“It’s very easy, these days especially, to look online and be persuaded, by people who know nothing about Northern Ireland, know nothing about the communities in Northern Ireland, know nothing about the history of Northern Ireland, to take actions that they otherwise would not take,” said PSNI Chief Constable Jon Boutcher. “Stop looking at this nonsense. Stop listening to these idiots. We will be going after them for the incitement that they’ve been doing.”

“I’m not talking about individuals in this press conference, but people will know who were online last night and inciting this behavior. They will know what they were doing. We will be going after them,” added Boutcher.

Despite this latest threat of a crackdown over online speech, Elon Musk, Tommy Robinson, and Restore Britain leader Rupert Lowe have neither tempered their critiques nor softened their rhetoric.

Robinson, for instance, wrote, “Stop importing rapists, murderers, and sex pests from savage third world countries who put young girls [sic] lives at risk. Once you have advocated for that, and the removal of unwanted illegal migrants from communities who never asked for or wanted them, then you can take the high road. Until then, keep your mouth shut.”

Lowe wrote early Wednesday, “Millions must go,” and “the Belfast victim has lost his left eye and has severe damage to his right eye. Hacked at the neck, with his eyes gouged. Men who inflict this brutal evil on others do not deserve to live.”

Musk shared a post rejecting the calls for calm, then tweeted, “F**k ’em.”

‘You monetized his death’: Allie Beth Stuckey calls out YouTuber who turned aborted baby with Down syndrome into content

Popular YouTuber Jesse Ridgway, who goes by “McJuggerNuggets,” set the internet on fire last week when he used the abortion of his unborn child with Down syndrome to create content for his audience.

“My wife and I made the very difficult decision to terminate the pregnancy due to Trisomy 21,” Ridgway wrote in a post on X. “The choice was not made lightly.”

“She underwent the procedure earlier this week and is on the mend. Thankfully, everything went smoothly, but emotionally we are drained. Trisomy 21, also known as Down Syndrome, is caused by an extra chromosome. It is caused by an error in cell division, like a glitch. The odds of a baby having it is 1 in 1000,” he added.

The couple has been documenting their pregnancy journey on their YouTube channel, where they’ve been recording their reactions to test results.

“You not only monetized your baby’s little life, but then you monetized his death. And not just his death, but also his murder. And then you want people to feel sympathetic toward you,” BlazeTV host Allie Beth Stuckey comments.

“This is morally chilling that you are admitting and trying to euphemize euthanizing a baby,” she continues, pointing out that Ridgway is apparently not actually “without compassion for vulnerable entities.”

Earlier in May, Ridgway celebrated the sixth birthday of his dog, who was diagnosed with stage 4 kidney disease the year prior, explaining that she is in the “0.001% of superhero dogs that continue living with no kidneys.”

“So that life was worth sacrificing for. His dog was worth paying lots and lots of money for, doing everything you could to keep this dog alive. Even though your dog has special needs, will not live a very long time,” Stuckey says.

“That dog apparently was more worthy of life than their living child,” she adds.

Spencer Pratt 2.0? Actor Michael Rapaport eyes run against NYC Mayor Mamdani

From Spencer Pratt to Mayor Michael Rapaport?

All eyes have been on Spencer Pratt, the reality show alum vying to wrest the City of Angels from Mayor Karen Bass.

Pratt’s insurgent campaign was felt from coast to coast. Now, as L.A.’s curious voting system seems to have sent him to a third-place finish, another actor turned candidate could take his place.

Did Pratt walk so Michael Rapaport could run?

‘Soft launch’

Rapaport is a familiar face from dozens of movies and TV shows since his 1992 film debut in “Zebrahead.” He recently joined Peacock’s “The Traitors,” a reality-show affair hosted by Alan Cumming. His brash persona proved a snug fit for the series, alienating some while bringing fresh friction to the game.

And, as he told the Hollywood Reporter in January, the show was part of his “soft launch” to unseat New York City Mayor Zohran Mamdani.

“I think that if you can have a mayor of New York who is a failed rapper, a failed actor, a failed music supervisor and who’s rapped and said so many regrettable things that he did … if nothing else, I have shown once again, especially on ‘Traitors,’ that I am what you see and you’ll get an honest mayor.”

Pratt didn’t lean on MAGA messaging or GOP-friendly talking points in his campaign. He played the outsider, a man motivated by losing his home in the Palisades fires and demanding that the person who let it happen be held accountable.

For Rapaport, Mamdani’s socialist policies and perceived animosity toward Jewish New Yorkers sparked his campaign, not any Republican fervor.

Accidental politicians

Call them accidental politicians. The facts on the ground made them do it. Rapaport explained his change of heart to Fox News.

“I never thought that I would even consider running for mayor of New York City, and I will do it with the best intentions.”

Rapaport leans to the left, but he has defied some of his party’s groupthink, particularly when it comes to his strong support of Israel.

Pratt and Rapaport share a grasp not just of social media but of media training in general. They have been around cameras for years, aware of the power video brings and how to weaponize it for a cause.

We’ve seen Pratt leverage those viral campaign videos, playing the frazzled Everyman eager to save his hometown. Rapaport, a trained comic in addition to his acting experience, could do the same.

Rapaport has some advantages over Pratt. He announced his campaign years before any voting happens, as opposed to Pratt’s abrupt decision. That gives Rapaport time to build his base, criticize Mamdani in real time, and let New Yorkers see what a democratic socialist can do to the Big Apple.

Rapaport is betting they won’t like the results.

Sharp elbows

Plus, Rapaport isn’t merely a reality show villain like Pratt, with all the baggage that entails. He has delivered memorable performances on FX’s “Justified” and Netflix’s “Atypical,” plus classic films like “Beautiful Girls,” “True Romance,” and “Cop Land.”

The veteran actor recently segued back to comedy, appearing in clubs across the country with a genuinely funny set built around his garrulous persona.

The downsides for the New York native, beyond the fear that he’s another actor playing the part of political savior? Rapaport throws plenty of sharp elbows on social media and podcasts. He famously teed off on President Donald Trump a few years ago, a potential boost to his New York candidacy.

But he softened that stance considerably post-October 7, re-evaluating the president’s policies and the lies spread in the media. That speaks to his maturation, but it might not play well in a cobalt blue city.

Relishing a fight

While Pratt promoted his family-man brand, Rapaport lives for the social media scrum. He’s naturally combative, willing to muck it up about sports, culture, and politics on any platform possible.

His “I Am Rapaport: Stereo Podcast” lets him weigh in on the New York Knicks, free speech, and much more. Here’s betting Team Mamdani will be combing through past episodes for potentially damaging material.

And they just might find some.

Pratt proved competitive in his upstart campaign, and even if the vote totals keep him in third place, he still gave the Democratic establishment a major scare.

Could Rapaport learn from Pratt’s bold run and write his own Hollywood ending?

From ancient rituals to modern shelves: Cedarwood’s quiet evolution

(NaturalNews) Cedarwood essential oil, especially from the Cedrus atlantica tree, has been used for thousands of years. Ancient Egyptians used it for medicine, …

“Breaking the Chains” on BrightU: How food forests are defeating the globalist depopulation agenda

(NaturalNews) On Day 7 of “Breaking the Chains: Decentralize Your Life” rerun, Rob and Jim Gale talk about former Pentagon security leader Christopher Allison, …

Dangerous FDA-Approved Sunscreen Chemicals Found in Bloodstream After Single Application, Study Says

(NaturalNews) A randomized clinical trial published in the Journal of the American Medical Association (JAMA) in January 2020 found that six active ingredients comm…

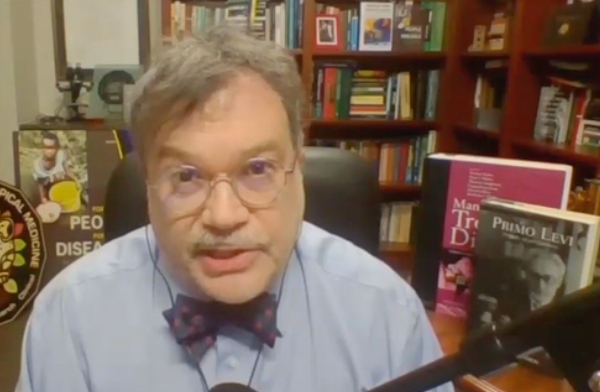

Vaccine Shill Peter Hotez Named in Sexual Harassment, Wrongful Termination Lawsuit

(NaturalNews) Annette Lee, a scientist and dean at Northwell Health’s Elmezzi Graduate School of Molecular Medicine, filed a federal wrongful termination lawsuit in…

CNN Report: Israel Deployed Special Forces to Azerbaijan, UAE, Iraq and Somaliland During Iran Conflict

(NaturalNews) Israel deployed special forces to Azerbaijan, the United Arab Emirates, Iraq and Somaliland during its war with Iran, according to a CNN report publis…

Russia Cuts Oil Exports Amid Ukrainian Drone Strikes and Domestic Shortages

(NaturalNews) Russia is reducing crude oil exports from its western ports to approximately 1.7 million barrels per day (bpd) in June, down from an estimated 2.5 mil…